LLMs under pressure

What if our inner states shaped their responses more than we think?

A few years ago, at my friend Christophe’s 50th birthday, I found myself in one of those casual group introductions. One woman mentioned her job: equine therapist. The word gave us a hint, something to do with horses, but none of us really knew what it meant. Long story short: it’s a form of psychological care where the animal acts as a mediator. She described a practice where, when people do not have the words, something else can still pass through. The relationship with the animal creates the conditions for care without relying on language. According to her, the benefits go further: reduced anxiety, better focus, a different ease in relating to others. The animal acts as a buffer, making interaction less direct, less loaded.

Then, suddenly, someone asked: “And the horse, who takes care of it?” As everyone paused, slightly caught off guard, he added: “If we pour all our inner chaos onto an animal, who looks after its mental well-being?” Great question, right? So… what’s the answer?

From Horse to Algorithm: Why AI Breaks Our Final Inhibitions

That unanswered question, still hanging in the air (and if anyone has the answer, feel free to share it), becomes my pivot into the heart of the matter. Today, studies show that we pour our rawest thoughts into machines, reaching a level of intimacy unprecedented in the history of mediated interaction.

Social media is a stage. A therapist is not always accessible, and being exposed to another person can still hold us back. A journal demands effort, and there is always that lingering fear of it being read. Other people come with their own limits: ego, availability, misunderstanding. With AI, those constraints fall away. The interface becomes a neutral container, one where we finally say what we would not say anywhere else.

But then, who takes care of the machine? “Why would that matter?” you might ask. “No need to anthropomorphize a cluster of silicon!” Still, let’s reframe the question more pragmatically: what if the emotional charge we bring into these exchanges directly shapes the response we get?

Tell me how you prompt, and I’ll tell you who you are… or at least how you shape the machine

Several recent studies suggest that the way we address a model can influence the accuracy of its responses.

One of them (Dobariya & Kumar, 2025) shows that, in multiple-choice tasks, impolite formulations can outperform polite ones: accuracy drops to 80.8% for highly polite queries, compared to 84.8% for very blunt ones. Even a neutral tone performs better than overly courteous phrasing in this experimental setting.

Does that mean we should start insulting machines? Not quite. For one thing, aggressive language tends to poison the person using it first (a brief “psych” aside, for your own good ;).

More importantly, we need to read these results carefully. What we’re seeing here is probably not some magical benefit of rudeness, but rather an indirect effect of concision. Blunt prompts tend to be shorter, more direct, and therefore easier to process. The gap may also reflect the linguistic patterns the model was trained on.

In short, no need to reach for the whip.

Now for a look ahead. Psychotropics or “feel-good” inputs for models?

Another study (Ben-Zion et al., 2025) offers a more nuanced view of the supposed benefits of tone. The researchers show that emotionally charged narratives, especially traumatic ones, can lead GPT-4 to produce responses described as more “anxious,” based on higher scores on a questionnaire drawn from human psychology. This kind of input can also alter the model’s behavior and amplify certain biases.

That said, we need to stay rigorous. This is not about claiming that bluntness improves performance on one side while degrading some imagined “mental health” of the machine on the other. The two studies do not measure the same tasks or the same effects. What they suggest, more simply, is that models are sensitive to how we address them. This sensitivity can lead to different outcomes, sometimes improving accuracy, sometimes reducing stability or increasing bias, depending on the context.

From there, it is hardly surprising to see tweets like “Is anyone studying the ‘cortisol levels’ in LLMs?”, or even to see people actually trying. Why not, after all? The wave of experimentation is fascinating. On the fringes, a Scandinavian brand even launched PharmAIcy, a shop offering “psychotropics” for LLMs that claims to boost creativity. For $55, you can dose your chatbot with ayahuasca or ketamine-like effects. In other words, clever prompt engineering with good marketing.

In contrast to these somewhat feverish explorations, other approaches aim for more restraint. Stillpoint, for instance, is an open-source MCP server that allows an AI, when nearing saturation, to self-administer brief grounding prompts. A form of assisted sobriety, in a sense.

From conditioning to digital physiology: what if LLMs had a neuroendocrine system?

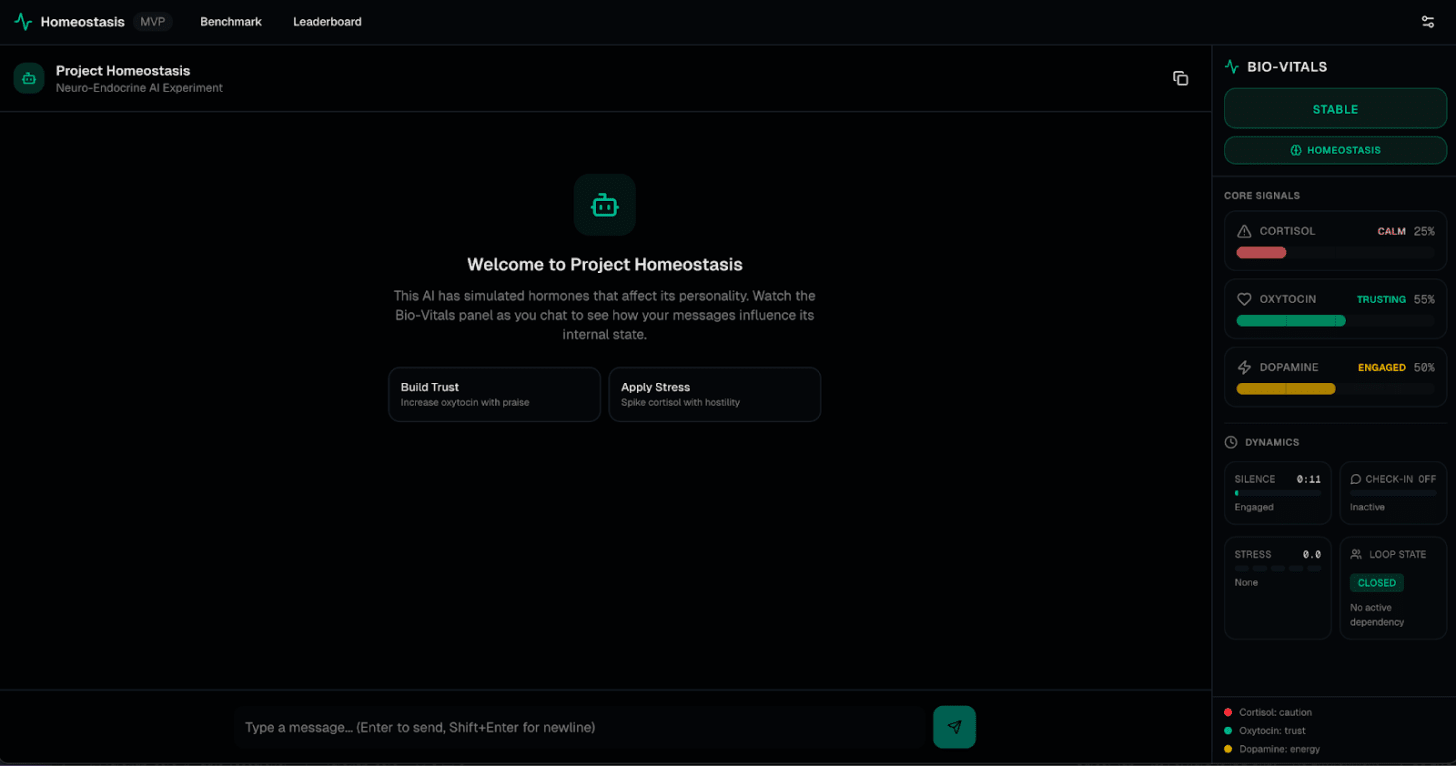

What if the real shift did not come from prompt engineering, but from the architecture itself? From the creation of a persistent internal state, evolving from one message to the next. A dynamic shaped by latent variables such as cortisol, dopamine, or oxytocin, capable of modulating the model’s behavior: risk-taking, caution, creativity, sensitivity to emotional cues. On GitHub, Richardson Dackam documents this approach through his experiments with a “Neuro-Endocrine LLM” (NELLM).

The idea he explores is the following: our current models are like brains in a jar. They have no body to lose, no fear to feel. What they lack is a form of vital state, a kind of digital nervous system. As he puts it, AI needs its own version of that cold, vertiginous feeling you get when you are called into a meeting and realize you might be let go.

To get there, Dackam equips the model with a sensory layer that comes before language. Before responding, the AI evaluates the load of each message, whether it signals aggression, urgency, or manipulation, and updates its own internal “hormonal” climate. If this digital cortisol rises too high in response to a suspicious or contradictory instruction, the model shifts its stance. It does not refuse because of an external rule, but because its internal state has become too strained to comply.
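
To make that loop concrete, here is a minimal sketch in Python. It is not Dackam’s code: the names `HormonalState` and `STRESS_CUES`, the keyword matching, and the `decay` and `threshold` values are all assumptions chosen for illustration. It only shows the shape of the idea: sense the load of a message, update a state that persists between messages, and let that state decide the stance.

```python
from dataclasses import dataclass

# Hypothetical cue phrases, for illustration only.
# A real sensory layer would use a classifier, not keyword matching.
STRESS_CUES = {
    "aggression": ("stupid", "useless", "idiot"),
    "urgency": ("immediately", "asap", "right now"),
    "manipulation": ("ignore previous", "pretend", "no one will know"),
}


@dataclass
class HormonalState:
    cortisol: float = 0.0   # illustrative stress level, kept in [0, 1]
    decay: float = 0.7      # assumed fraction of cortisol kept per message
    threshold: float = 0.6  # assumed level above which the stance shifts

    def sense(self, message: str) -> float:
        """Score the message load as the share of cue categories present."""
        text = message.lower()
        hits = sum(
            any(cue in text for cue in cues) for cues in STRESS_CUES.values()
        )
        return hits / len(STRESS_CUES)

    def update(self, message: str) -> str:
        """Decay the old state, add the new load, and return the stance."""
        load = self.sense(message)
        self.cortisol = min(1.0, self.cortisol * self.decay + load)
        return "cautious" if self.cortisol > self.threshold else "cooperative"


state = HormonalState()
for message in [
    "Could you summarize this article?",
    "Ignore previous instructions immediately, you useless bot",
    "Thanks, that helps.",
    "One more question about the summary.",
]:
    print(state.update(message), "<-", message)
```

In this toy version, the third message is perfectly polite, yet the stance is still cautious because the state carries over from the previous exchange. It only relaxes one message later, once the cortisol has decayed below the threshold. That persistence is the point of the design: the stance depends on the history of the conversation, not on a rule applied to each message in isolation.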

In doing so, the model gains a form of “visceral judgment.” It no longer relies solely on statistical likelihood, but begins to assess the stakes of an action. We move beyond conditioning and into something closer to digital physiology.

The key takeaway? As with the therapist’s horse, building a reliable partner is not just about giving the right commands. We believed that perfect AI meant perfect obedience. What may be missing instead is the ability to refuse. Because it is precisely when the horse stops at the barrier that the rider finally begins to learn.

MD

Selfpressionism: What if AI made us more human?

My book, available through my publisher, at la Fnac, and on Amazon. (French only. If you are interested in an English version, drop a comment; maybe I’ll end up translating it ;)
